My Adobe Summit 2026 Session Picks for the Optimization Crowd (Target + AJO)

Adobe Summit is coming up fast, and this year will be my 17th or 18th in-person Summit. I’ve been attending since Omniture acquired Offermatica in late 2007, which is a way of saying I’ve watched “optimization” evolve from classic A/B testing into a world of decisioning, orchestration, real-time data, and now agentic AI.

If you’re in the Optimization / Personalization space and you’re using Adobe Target and/or Adobe Journey Optimizer (AJO), here are the sessions I’m prioritizing in Las Vegas – and why I think they’re worth your time.

A quick note before we get into it: session rooms fill up, so book early. Times can change too, so favorite a few backups.

The shift happening in optimization

For years, optimization success came down to one question:

Can you launch tests fast and measure lift reliably?

That still matters — but the frontier has moved. Today’s leaders are solving for:

- Scale without chaos (more experiences, more audiences, more channels)

- Decisioning instead of “page tests” (real-time choices, ranked offers, contextual logic)

- Operational maturity (governance, QA, risk controls, program visibility)

- Reusable learning (so insights compound instead of disappearing into decks)

- AI that actually ships (not just “AI ideas,” but AI embedded into workflows)

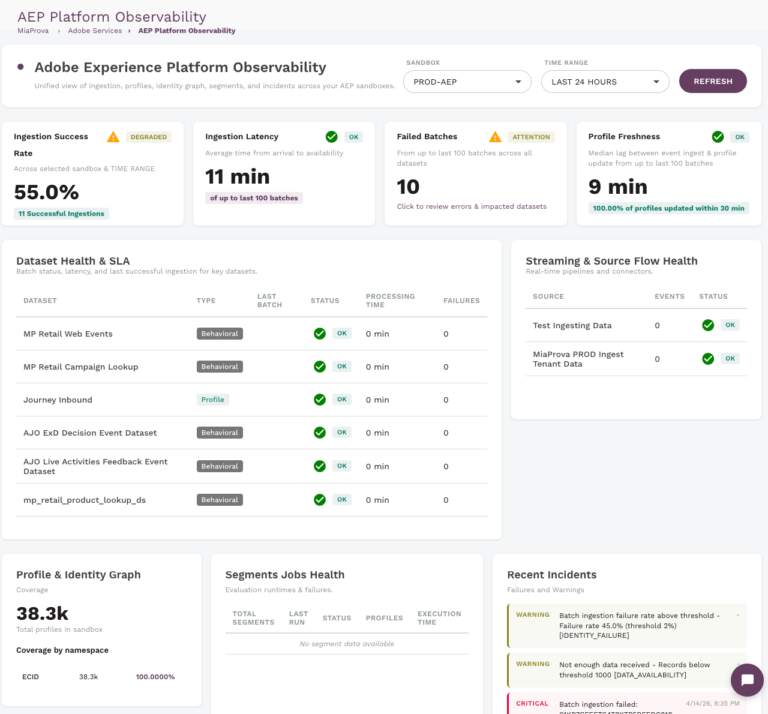

That’s exactly why MiaProva has been expanding beyond “alerts” into a broader layer of program operations and observability for teams running personalization: clearer visibility into what’s live, faster detection when experiences or measurement drift, and better workflows that keep scaling efforts safe.

The themes I’m chasing this year

When I look at the strongest optimization programs, they’re all converging on the same big three:

- Scale: personalization that doesn’t collapse under its own operational weight

- Decisioning: moving from “test this page” to “choose the best next experience” across channels

- Speed-to-learning: tighter loops from insight → hypothesis → activation → measurement

Every session below ladders into at least one of those.

What I’m most excited about this year is how clearly Summit is reflecting the same shift we’re seeing with customers: optimization teams need more than testing tools…they need an operating layer that keeps things measurable, governable, and scalable. As programs move toward decisioning and agentic workflows, the questions change from “can we build it?” to “can we run it safely, repeatedly, and with shared visibility?” That’s the lane MiaProva is continuing to evolve into.

Monday, April 20

1) Winning Fans with Personalization: Scaling Team Experiences Online [S926]

Mon, Apr 20 — 10:00 AM–11:00 AM PT

This is the type of case study I love: a big brand finds real lift with personalization… and then hits the wall we’ve all hit:

“The proof of concept worked — but it’s too manual to scale.”

What’s interesting here is the move toward a tokenized personalization framework. If you’ve ever tried to scale personalization across dozens (or hundreds) of content permutations, you know the real bottleneck isn’t Target (or AJO)… it’s the operating model and the content/data contract.

What I’ll be listening for:

- How they define tokens (business-owned vs. tech-owned)

- How they prevent token sprawl from becoming “fragmentation with a nicer name”

- Where the “truth” lives: AEP? CMS? a rules service?

- How they operationalize “favorite team” signals (and how they validate them)

If you run Target today, this feels like a blueprint for moving from one-off experiences to a reusable system.
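To make “tokenized” a little more concrete, here is a minimal Python sketch of the general idea: a content template with named tokens, a registry that records who owns each token and what its safe default is, and a resolver that refuses tokens nobody registered. This is not the presenters’ framework; the registry, the `{{token}}` syntax, and every name below are hypothetical and only illustrate the content/data contract.

```python
import re

# Hypothetical token registry (an assumption for illustration only):
# each token has a named owner and a safe default for unknown visitors.
TOKEN_REGISTRY = {
    "favorite_team": {"owner": "business", "default": "your team"},
    "hero_image": {"owner": "tech", "default": "/img/league-generic.jpg"},
}

# Tokens look like {{token_name}} in this sketch.
TOKEN_PATTERN = re.compile(r"\{\{(\w+)\}\}")


def resolve_tokens(template, profile):
    """Fill each {{token}} from the profile, falling back to its default."""

    def substitute(match):
        name = match.group(1)
        if name not in TOKEN_REGISTRY:
            # Unregistered tokens fail loudly, which is one way to limit sprawl.
            raise KeyError(f"Unregistered token: {name}")
        value = profile.get(name)
        return value if value else TOKEN_REGISTRY[name]["default"]

    return TOKEN_PATTERN.sub(substitute, template)


headline = "Gear up for {{favorite_team}} this season"
print(resolve_tokens(headline, {"favorite_team": "the Cubs"}))
print(resolve_tokens(headline, {}))  # falls back to the registered default
```

The design choice worth noticing is that one template serves every audience, and the registry is the single place where ownership and fallbacks get decided.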

2) AI to Activation: How Microsoft Is Reimagining Marketing at Scale [S916]

Mon, Apr 20 — 11:30 AM–12:30 PM PT

A lot of teams are stuck in “AI inspiration mode” – pilots everywhere, but not much changes day-to-day. Microsoft’s story is usually valuable because they’re forced to answer the hardest question:

How do you drive adoption across different roles, skill sets, and incentives?

Tools don’t scale programs – enablement and governance do.

What I’ll be listening for:

- Their enablement framework (who trains whom, how often, what “good” looks like)

- Their measurement model for AI adoption (beyond “people tried it once”)

- How they connect AI-created content to activation workflows (the “last mile” problem)

- Where human review sits (and what they refuse to automate)

Because the future of experimentation isn’t just “better ideas.” It’s faster throughput without losing quality or brand control.

3) Data Mirror and MCP: Modern Connectivity for Customer Journey Analytics [L611]

Mon, Apr 20 — 4:30 PM–6:00 PM PT

This is my “don’t miss” technical session.

Optimization programs are only as good as (1) the data you can trust, and (2) the speed at which you can use it.

If Adobe is making it easier to keep CJA aligned with upstream sources via change data capture, that’s huge for measurement integrity. And if MCP genuinely lowers the barrier for agentic connectivity (less custom API wrangling), that can change how teams work.

What I’ll be listening for:

- How “automatic” Data Mirror really is (and where it still gets brittle)

- Governance + lineage: how changes are tracked and audited

- Real examples of MCP workflows (not just conceptual diagrams)

- How this impacts the handoff between CJA insights → activation in Target/AJO

Who should attend: anyone building (or migrating to) CJA, anyone connecting offline + enterprise data into optimization decisions, and anyone tired of brittle pipelines.

Tuesday, April 21

4) The Future of Experimentation: How Agentic AI is Fueling Smarter Growth [S523]

Tue, Apr 21 — 11:30 AM–12:30 PM PT

If you’ve ever asked:

- “Why did this test win?”

- “What did we learn that we can reuse?”

- “Why do we keep running the same kinds of tests?”

…this session is aiming straight at that pain.

The promise here is compelling: AI-led causal insights, better ideation tied to business KPIs, and adaptive experimentation (iterating variants mid-flight).

My “healthy skepticism” lens:

I’m excited – but I want to see how they avoid:

- black-box “because AI said so” explanations

- teams losing hypothesis discipline

- novelty chasing over fundamentals

What I’ll be listening for:

- How they explain causal drivers in plain language

- How they connect predicted lift to business value (not vanity metrics)

- Guardrails for adaptive changes (brand, legal, QA, experience consistency)

5) AI-Powered Decisioning for Web Experiences in Adobe Journey Optimizer [L610]

Tue, Apr 21 — 1:00 PM–2:30 PM PT

This is the practical “how-to” counterpart to the strategic AI sessions.

If you’re a Target person, think of this as leaning deeper into a decisioning model where you can:

- incorporate real-time context

- apply ranking formulas

- blend model scores with profile + offer attributes

- orchestrate decisions inside journeys

What I’ll be listening for:

- How they design ranking formulas that don’t become impossible to govern

- How they operationalize “external data sources” cleanly

- Patterns for web integration that don’t wreck site performance

- How debugging works when the decision is dynamic and multi-signal

Why it matters:

A lot of orgs are moving from “page tests” to offer + content decisioning, and this is where that becomes real.
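For anyone newer to decisioning, here is a rough Python sketch of what “blending model scores with profile + offer attributes” can look like as a weighted ranking formula. It is not AJO’s ranking formula syntax or API; the `Offer` fields, the weights, and the profile and context keys are all assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Offer:
    name: str
    model_score: float  # assumed: propensity from some model, 0 to 1
    priority: int       # assumed: business priority, 0 to 100
    category: str


# Hypothetical weights; a real program would need to govern and version these.
DEFAULT_WEIGHTS = {"model": 0.5, "priority": 0.3, "context": 0.1, "profile": 0.1}


def rank_offers(offers, profile, context, weights=DEFAULT_WEIGHTS):
    """Return offers sorted best-first by a simple weighted blend."""

    def score(offer):
        context_match = 1.0 if offer.category == context.get("page_category") else 0.0
        profile_match = 1.0 if offer.category == profile.get("preferred_category") else 0.0
        return (
            weights["model"] * offer.model_score
            + weights["priority"] * offer.priority / 100
            + weights["context"] * context_match
            + weights["profile"] * profile_match
        )

    return sorted(offers, key=score, reverse=True)


offers = [
    Offer("Jersey sale", model_score=0.55, priority=40, category="merch"),
    Offer("Ticket bundle", model_score=0.40, priority=90, category="tickets"),
]
ranked = rank_offers(
    offers,
    profile={"preferred_category": "merch"},
    context={"page_category": "tickets"},
)
print([offer.name for offer in ranked])
```

Even in a toy like this, the governance question shows up quickly: change the weights and the winner changes, so someone has to own them.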

6) MLB’s Roadmap to “Sunny and 75”: Scaling Personalized Fan Journeys [S925]

Tue, Apr 21 — 3:00 PM–4:00 PM PT

I’m a sucker for well-told fan personalization stories — but what I really want here is the operational roadmap:

How do you move from linear delivery cycles to agile journey iteration — without chaos?

They’re talking about AJO at scale, real-time engagement across lifecycle moments, and measuring end-to-end with CJA.

What I’ll be listening for:

- How they sequence use cases (what they did first vs. later)

- What “weeks not months” required (process, tooling, org changes)

- Their measurement approach (especially attribution debates)

- How they close the loop from insight → next best action

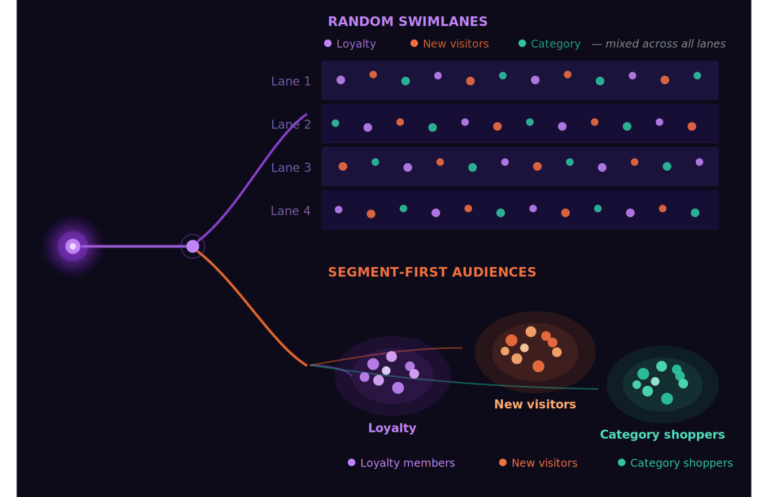

7) Using AI to Build and Activate Audiences in Adobe Real-Time CDP [L515]

Tue, Apr 21 — 4:00 PM–5:30 PM PT

If you’ve been doing personalization long enough, you know audiences are either:

- your biggest accelerator, or

- your slowest bottleneck

This lab is appealing because it’s hands-on: AI-assisted audience workflows, refinement, validation, and activation — with the governance conversation included (which is where most “AI audience” ideas fall apart).

What I’ll be looking to walk away with:

- Practical patterns for AI-assisted audience building (and where humans must review)

- How they recommend validating audience logic

- How teams operationalize privacy + governance without freezing progress

Wednesday, April 22

8) How US Bank Optimizes Customer Engagement with AI Decisioning [S524]

Wed, Apr 22 — 9:00 AM–10:00 AM PT

Financial services tends to be a great proving ground because constraints are real: compliance, risk, governance, and scrutiny. If an AI decisioning approach works there, it’s usually portable.

They’re also calling out A/B testing design for decisioning strategies — which is exactly the type of “real-world hard part” I want more teams talking about.

What I’ll be listening for:

- Their experimentation design around decisioning (control strategy, holdouts, etc.)

- Organizational learnings: what broke, what stuck, what surprised them

- Roadmap items that will matter for enterprise teams (not just demos)
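As a reference point for the control-strategy question, here is a minimal Python sketch of one common pattern: deterministic, hash-based assignment that reserves a holdout and splits the rest between two decisioning strategies. It is not U.S. Bank’s design or an Adobe API; the arm names, percentages, and function name are assumptions for illustration.

```python
import hashlib


def assign_arm(visitor_id, experiment, holdout_pct=10, challenger_pct=45):
    """Deterministically map a visitor to an arm using a hash bucket (0-99)."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % 100

    if bucket < holdout_pct:
        return "holdout"              # gets no decisioning at all
    if bucket < holdout_pct + challenger_pct:
        return "challenger_strategy"  # hypothetical new decisioning strategy
    return "champion_strategy"        # hypothetical current strategy


# The same visitor always lands in the same arm for a given experiment.
print(assign_arm("visitor-123", "offer-ranking-test"))
```

Salting the hash with the experiment name keeps one experiment’s holdout from lining up with another’s, and the holdout gives a baseline for what happens with no decisioning applied.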

9) Building NFL Engagement with Optimization Best Practices in Adobe Target [S527]

Wed, Apr 22 — 10:30 AM–11:30 AM PT

This is the “Target people, get in here” session.

Optimization best practices are timeless, but I’m especially interested in how they:

- prioritize

- translate reporting into the next wave of tests

- selectively introduce advanced capabilities without overcomplicating the program

What I’ll be listening for:

- Their prioritization model (and what they stopped doing)

- How they structure learnings so they compound over time

- How they connect “fan intent” to test strategy (not just design tweaks)

- Practical program mechanics: intake, QA, governance, velocity

If you’re running Target today and want to leave Summit with immediately usable ideas, this is a strong bet.

10) Experiment and Optimize Faster with Adobe Journey Optimizer [L536]

Wed, Apr 22 — 1:30 PM–3:00 PM PT

This one ties the whole week together for me: AI that accelerates the experimentation lifecycle, unified measurement across Adobe Analytics and CJA, and the operational layer (test catalogs, performance views, agent-driven summaries).

This is where I expect the “how do we scale the program?” conversation to get very real.

What I’ll be listening for:

- How they normalize measurement between Analytics and CJA

- How “agent-driven workflows” are implemented (and what teams need to support them)

- How they manage experiment catalogs so learnings don’t get lost

- Where this complements Target vs. replaces workflows vs. creates new ones

Let’s connect

If you’re attending Summit in Las Vegas and working in optimization, personalization, Adobe Target, AJO, RT-CDP, or CJA, I’d genuinely love to connect in person. I always learn more from hallway conversations than I do from any slide deck.

Feel free to:

- connect with me on LinkedIn, or

- reach out directly if you want to compare notes, talk shop, or meet up between sessions.

Adswerve will also be hosting some events, which I’ll share once they’re finalized. See you in Vegas, and safe travels.